Incredible! I have actually gotten a submission already! Thank you very much (you know who you are; I am not sure if you wish to break your anonymity, so I did not name you yet)! The experiment is already a huge success. I knew that random submissions would break my system and give me valuable input.

The biggest conclusion is that 1920 words are not enough and that there is no way for me to match a preexisting word list against image names. In this first submission most words were very common, yet plenty of them were missing from the database. The submission also includes "neosesquecentiennialism.floor.png":

And a mighty fine visual representation of this concept it is! OK, I have absolutely no idea what this word means. Is it a real word? So the preset word list matching will be abandoned. Rather, I will create the word list on the fly containing only the words that were encountered in the image files. This change should take me a couple of days to implement and then I'll be able to post the first screenshot of the engravings.
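As a rough illustration of building the word list from the image files themselves, here is a minimal sketch. It assumes every submission is named `<word>.<surface>.png`, a pattern inferred only from the single example above; the function name `build_word_list` and the skipping of non-matching files are hypothetical choices, not the game's actual code.

```python
from collections import defaultdict
from pathlib import PurePath


def build_word_list(filenames):
    """Collect words from names shaped like '<word>.<surface>.png'.

    The naming pattern is an assumption based on one example file name.
    Returns a dict mapping each word to a sorted list of its surfaces.
    """
    words = defaultdict(set)
    for name in filenames:
        # Drop any directory part and normalise case before splitting.
        parts = PurePath(name).name.lower().split(".")
        # Skip anything that does not match the assumed three-part pattern.
        if len(parts) != 3 or parts[2] != "png" or not parts[0] or not parts[1]:
            continue
        word, surface, _ext = parts
        words[word].add(surface)
    return {word: sorted(surfaces) for word, surfaces in words.items()}


if __name__ == "__main__":
    found = build_word_list([
        "neosesquecentiennialism.floor.png",
        "chopping.floor.png",
        "chopping.wall.png",
        "readme.txt",
    ])
    for word, surfaces in sorted(found.items()):
        print(word, surfaces)
```

With this approach an unknown word simply becomes a new entry instead of a failed lookup against a fixed list.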

There are further conclusions to be drawn from the sizes of the submitted images. I'll talk about this later, once I decide how I can get the engraving tile onto both floors and walls. Maybe applying a skew effect on the fly to create three different tiles?

So engravings are delayed a little while I adapt my system to the conclusions I drew from the submission. I still accept future submissions. Go wild!

So what else can I talk about? I finished implementing and cleaning up the final version of the structure that the "core" content pack will take. Nice and clean:

Here you can see the XML content descriptor and a few image files. There is still some work left on the matching of tile indexes to tile sheets, but the system is coming along nicely.

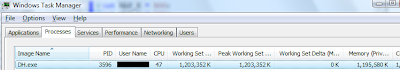

I said in my last post that the new floor system eats up a lot of RAM relatively speaking. And a few posts ago I said I would like to create bigger maps. So I thought I'd do this and see how much memory it would end up eating. With 300x300x40 maps, the RAM consumption is low enough and not a problem at all. But I increased it to 1000x1000x60 and this is the result:

Ouch! Almost 1.2 GiB RAM. Not cool, dude. Sure, 1000x1000x60 is way too big. I tried to play like this and scrolling the map from corner to corner is tedious and you can easily get lost. Zooming out to the maximum helps a lot in finding your way around the landscape, but playing like this can quickly turn into an extended game of zoom-a-weasel. On the other hand, the game runs exactly as smoothly as it did with the smaller size, barring the map load time.
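To put the numbers in perspective, here is a back-of-the-envelope calculation. It assumes the whole 1.2 GiB is map data and that memory scales linearly with the number of cells; neither was measured, so treat the results as rough upper bounds. The helper names are made up for this sketch.

```python
GIB = 1024 ** 3
MIB = 1024 ** 2


def cell_count(width, height, depth):
    """Total number of map cells for the given dimensions."""
    return width * height * depth


def bytes_per_cell(total_bytes, width, height, depth):
    """Average memory per cell, assuming all of it belongs to the map."""
    return total_bytes / cell_count(width, height, depth)


def estimate_mib(per_cell, width, height, depth):
    """Projected map memory in MiB under the linear-scaling assumption."""
    return per_cell * cell_count(width, height, depth) / MIB


if __name__ == "__main__":
    # Observed: almost 1.2 GiB for a 1000x1000x60 map.
    per_cell = bytes_per_cell(1.2 * GIB, 1000, 1000, 60)
    print(f"cells in the big map: {cell_count(1000, 1000, 60):,}")
    print(f"bytes per cell:       {per_cell:.1f}")
    print(f"300x300x40 estimate:  {estimate_mib(per_cell, 300, 300, 40):.0f} MiB")
```

Under these assumptions the big map has 60 million cells at about 21.5 bytes each, and a 300x300x40 map would need about 74 MiB, which fits the observation that the smaller size is not a problem.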

So what to do now? I do not know if going all out and implementing a very complex and space-efficient map storage is worth the effort. I have an idea that would save data in two steps and greatly reduce memory consumption on such huge maps, but the reduction would only apply while the map is unexplored. If you explore 100% of the map, memory consumption could get even bigger. Or maybe a less extreme implementation that is more of an optimization and reduces memory consumption to, let's say, 600 MiB, which is more reasonable. Or try to find a better solution that suffers from none of the weaknesses above? Or just go with what works fine for common map sizes?
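One possible reading of the "only pay for explored areas" trade-off is lazy chunk allocation, sketched below. This is not the two-step idea itself, whose details are not described here; the class `LazyChunkMap`, the 16x16 chunk size, and the one-byte-per-cell payload are all assumptions chosen to keep the example small.

```python
CHUNK = 16  # assumed chunk edge length, in cells


class LazyChunkMap:
    """Stores cell data only for chunks that have been written to."""

    def __init__(self, width, height, depth, default=0):
        self.size = (width, height, depth)
        self.default = default  # value reported for untouched cells (0..255)
        self.chunks = {}        # (chunk_x, chunk_y, z) -> bytearray

    def _locate(self, x, y, z):
        width, height, depth = self.size
        if not (0 <= x < width and 0 <= y < height and 0 <= z < depth):
            raise IndexError((x, y, z))
        key = (x // CHUNK, y // CHUNK, z)
        offset = (y % CHUNK) * CHUNK + (x % CHUNK)
        return key, offset

    def get(self, x, y, z):
        key, offset = self._locate(x, y, z)
        chunk = self.chunks.get(key)
        return self.default if chunk is None else chunk[offset]

    def set(self, x, y, z, value):
        key, offset = self._locate(x, y, z)
        chunk = self.chunks.get(key)
        if chunk is None:
            # First write into this chunk: allocate it now.
            chunk = bytearray([self.default]) * (CHUNK * CHUNK)
            self.chunks[key] = chunk
        chunk[offset] = value

    def allocated_cells(self):
        """Number of cells that currently occupy real storage."""
        return len(self.chunks) * CHUNK * CHUNK


if __name__ == "__main__":
    world = LazyChunkMap(1000, 1000, 60)
    world.set(5, 5, 0, 7)
    print(world.get(5, 5, 0), world.allocated_cells())
```

A fresh map costs almost nothing, but a fully explored one pays for every chunk plus the dictionary overhead, which is the same weakness described above: at 100% exploration the footprint can exceed that of a plain array.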

What do you think?

That was a typo. I accidentally typed "neosesquecentennialism" when I meant to type "chopping". Spellcheck should have caught it.

If the game in its final state takes up less than 1.5 gigs of RAM, I think that'll suit over 95% of the people who would be playing it. If the other 5% can just reduce the map size to get the game playable, then my opinion is that that would be more than acceptable.

This is really something amazing!!! My jaw hit the floor when I saw this. Every original DF tileset has nothing on this! If there is any way I can assist in the development of this game, please let me know! Are you accepting donations? This project cannot die! If you manage to complete this ambitious undertaking then you will be on par with Toady One himself and you will become revered as a titan of gaming!

Most people have 4 gigs minimum in their computer, so 1.2 or even 2 GB won't be a problem. When my web browser is in a bad mood it gobbles up 2 GB without me noticing until I try to run a game that needs/wants 2 GB. I think optimizing it will be more work than it's worth.

As seen in post 42, RAM issues have been solved by using a really aggressive optimization method called IPS.

So while your 2/4 GiB RAM machines won't have problems running the game, neither will people with 128/256 MiB of free RAM (not including OS need).

To Geoff Dunn:

Thank you for the kind words! I am glad that you found something interesting and I hope that version number 0.1 will be a great first step for this project.

Right now I do not know what someone could do to help the project and I am not accepting donations. Some form of monetization will be introduced eventually to cover some costs, but I am not done researching the legislation involved.